What is virtualization? - A concept explained

Question

What is virtualization?

Answer

Introduction

This article describes the basic concept of virtualization. It gives the reader an insight into the technology itself, as well as the applications and benefits. It also puts the technology into the perspective of the safety and security industry.

Running software on top of a virtual infrastructure increases the complexity of the troubleshooting process. Bosch after-sales support teams might ask you to prove that the resources assigned to the virtual machine match the resources described in the datasheet of the specific software. Please note that Bosch after-sales support teams cannot troubleshoot performance issues of the virtual infrastructure itself; they can only indicate that a lack of resources exists.

What is virtualization?

This section introduces the concept of virtualization. It explains three basic terms (hypervisor, virtual machine and system management) and should allow the reader to understand what virtualization is.

Hypervisor

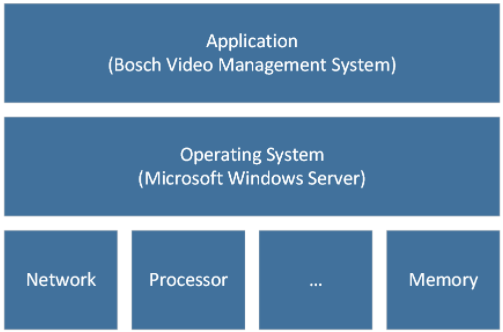

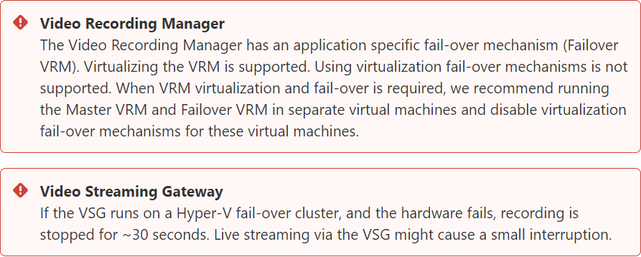

Virtualization introduces an additional layer between the operating system (for example, Windows or Linux) and the hardware. This layer is called a “hypervisor”. The image below shows a traditional system configuration, without a hypervisor.

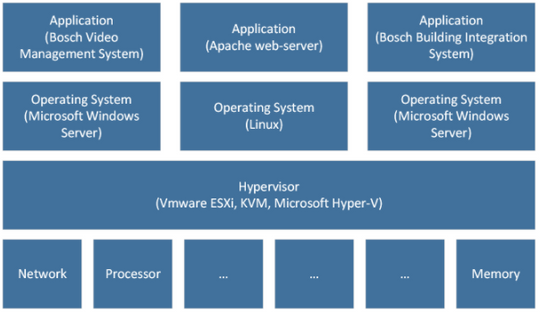

A hypervisor exposes, shares, and distributes hardware resources to an additional layer of virtual operating systems, or “virtual machines” (shown in the image below).

The hypervisor controls how many resources a virtual machine can claim. For example, when a physical machine is equipped with 16 GB of memory, the hypervisor can be configured to expose 8 GB to the first virtual machine, 4 GB to a second virtual machine, and the remaining 4 GB to a third virtual machine. A similar concept can be applied to processing power.

Such a static configuration severely limits the flexibility of resource consumption. That is why most hypervisors are equipped with advanced time-sharing mechanisms. They maintain a “pool” of hardware resources (processing power, memory, etc.) and allocate these to the virtual machines on the fly. Even some overbooking can be applied: if virtual machine 1 consumes 80% of all processing power during the night, and virtual machine 2 consumes 80% of processing power during the day, the hypervisor will ensure that both virtual machines get access to the resources they need. This does introduce an additional challenge: the pool of resources needs to be big enough to ensure that all virtual machines can consume the resources they require, at the time they require them.
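The pool-sizing challenge described above can be illustrated with a minimal Python sketch. The virtual machine names, the two-slot demand profile, and the function `peak_pool_demand` are hypothetical and not part of any hypervisor API. They only show that the pool has to cover the highest combined demand at any one moment, not the sum of the individual peaks.

```python
def peak_pool_demand(demand_by_vm):
    """Return the highest combined demand across all time slots.

    demand_by_vm maps a VM name to a list of demand values (percent of the
    physical host's processing power), one value per time slot.
    """
    slots = zip(*demand_by_vm.values())
    return max(sum(slot) for slot in slots)


# Hypothetical profile with two time slots: [night, day]
demand = {
    "vm1": [80, 15],  # busy during the night
    "vm2": [10, 80],  # busy during the day
}

combined_peak = peak_pool_demand(demand)
sum_of_peaks = sum(max(values) for values in demand.values())

print(f"combined peak demand: {combined_peak}%")
print(f"sum of individual peaks: {sum_of_peaks}%")
print("fits on one host:", combined_peak <= 100)
```

In this assumed profile the two machines together never need more than 95% of the host, although their individual peaks add up to 160%. If both peaks fell in the same time slot, the pool would be too small.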

The management tasks of the hypervisor also consume some resources of the physical machine. The amount of this "overhead" depends on the efficiency of the hypervisor and the deployed functionality.

Virtual machines

A virtual machine is a "computer". It has one or multiple (virtual) processors, (virtual) memory, and (virtual) hard disk drives. Instead of communicating directly with this hardware, the virtual machine communicates with the hypervisor. However, the virtual machine itself is not aware of this and behaves mostly the same as a physical machine. This means that a virtual machine has a BIOS which allows some low-level configuration, and that an operating system (for example, Windows or Linux) can be installed, on top of which applications (such as BVMS and BIS) can run.

System management

When multiple physical systems are running the same hypervisor, additional use-cases can be deployed. One of these use-cases is to increase the reliability of the environment. Other examples include easier system maintenance and monitoring. To allow these additional use-cases, the environment needs to be managed by a single entity: a management service. This management service monitors the hypervisor and the underlying hardware, and can control the environment (for example, by pushing configuration).

From an architectural perspective this concept is similar to the tasks of a video management system, but instead of managing cameras and storage devices, the virtual management system manages hypervisors and virtual machines.

Usage of virtualization

The technology is, of course, very interesting. But why would we introduce all of this complexity into an IT environment which is already complex on its own? In other words: why would we use the concept of virtualization at all?

Virtualization increases system efficiency

The usage of computer-based systems has grown tremendously in the last couple of decades. This is not only related to the increasing usage of the internet: businesses are also deploying more computer-based systems for all kinds of applications, for example video surveillance. Ideally, all of these applications are hosted on separate machines. This makes it easier to manage and maintain them. In addition, the decoupling of applications reduces the risk that one application affects other applications and causes problems. However, the decoupling of applications brings a couple of challenges:

- Due to ever-increasing performance, hardware can offer much more processing power than is required by most applications. Depending on the performance and application, an average processor consumption of 5-7% is assumed. As a result, 93-95% of processing power is wasted.

- Having a dedicated hardware device for each application increases the general costs of operating an infrastructure. Each hardware device needs its dedicated network and power connections. It consumes power (that needs to be cooled) and takes up space in a rack.

- In mission-critical environments the impact is even bigger: hot-standby or cold-standby systems are more or less waiting for a component to fail and are not used for anything else.

Virtualization enables multiple applications to run on a single hardware device, while still being (logically) decoupled from each other.

Virtualization decreases hardware costs

In essence, virtualization enables a more effective use of the available computing power: instead of consuming 5-7% over 20 physical servers, we can consume 20-35% of computing power over 5 physical servers. This, of course, depends on the nature of the application(s) running in the environment. In this example this results in a reduction of 15 servers: purchasing, power, cooling, network connectivity and maintenance are all reduced by 75%.
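The arithmetic behind this example can be checked with a short Python sketch. The function name `consolidated_utilization` is hypothetical, and the calculation deliberately ignores hypervisor overhead and uneven load, so it should be read as a lower bound rather than a sizing rule.

```python
def consolidated_utilization(avg_utilization, servers_before, servers_after):
    """Average utilization (percent) per server after consolidation.

    Assumes the total load stays the same and is spread evenly; hypervisor
    overhead is not modelled.
    """
    total_load = avg_utilization * servers_before
    return total_load / servers_after


low = consolidated_utilization(5, 20, 5)
high = consolidated_utilization(7, 20, 5)
server_reduction = (20 - 5) / 20 * 100

print(f"utilization after consolidation: {low:.0f}-{high:.0f}%")
print(f"reduction in physical servers: {server_reduction:.0f}%")
```

Under these assumptions the consolidated servers run at 20-28% on average, which falls within the range quoted above. The overhead of the hypervisor and the management layer comes on top of this.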

Most hypervisors are free of charge, so the hypervisor itself does not increase licensing costs. Operating system licenses need to be purchased and maintained for physical machines as well, so there is no difference in this area. However, the additional management software does increase the licensing costs considerably. This is often forgotten: Microsoft, for example, charges for the use of System Center while the Hyper-V hypervisor is more or less free of charge. The additional complexity also requires time, training and experience from IT management staff.

Virtualization can increase system reliability

Because the management service constantly monitors the environment, it knows the state of the environment and can act when failures occur. The most common functionality, shared across different platforms, is explained in this section.

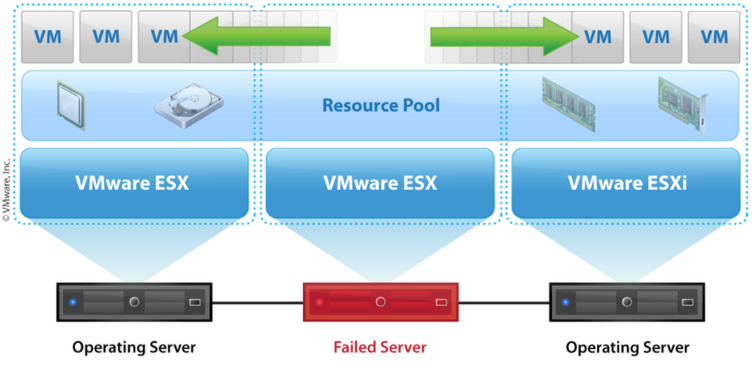

Active-Passive (High-Availability)

Let's assume one physical server is running three virtual servers. Both the physical server and the virtual servers send a "heartbeat" (monitoring signal) to the management service. The environment is built on the assumption that one physical server can fail, and it has enough spare computing resources to absorb such a failure. When the physical server fails, the management service no longer receives the heartbeat of the physical server or of its virtual machines. The management service immediately reacts by booting three new instances of the virtual machines and spreading them over the other physical servers, depending on the available capacity. The downtime of the virtual machines is mostly limited to a minute or two, depending on the time it takes to start the operating system and the services running on top of it.
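The heartbeat mechanism described above can be sketched in a few lines of Python. This is an illustration only and does not reflect how any specific product implements high availability: the host names, timestamps, capacity units, and the functions `find_failed_hosts` and `restart_plan` are all hypothetical.

```python
def find_failed_hosts(last_heartbeat, now, timeout):
    """Return the hosts whose last heartbeat is older than the timeout."""
    return [host for host, seen in last_heartbeat.items() if now - seen > timeout]


def restart_plan(failed_host, vms_on_host, free_capacity):
    """Assign each VM of the failed host to the surviving host with the most free capacity.

    vms_on_host:   host name -> {VM name: required capacity units}
    free_capacity: surviving host name -> free capacity units
    Raises RuntimeError if a VM does not fit on any surviving host.
    """
    free = dict(free_capacity)  # work on a copy
    plan = {}
    # Place the largest VMs first so they are not squeezed out by small ones.
    for vm, need in sorted(vms_on_host[failed_host].items(), key=lambda kv: -kv[1]):
        target = max(free, key=free.get)
        if free[target] < need:
            raise RuntimeError(f"no capacity left for {vm}")
        free[target] -= need
        plan[vm] = target
    return plan


# Hypothetical state: timestamps in seconds, capacity in arbitrary units.
last_heartbeat = {"host-a": 100, "host-b": 128, "host-c": 129}
vms_on_host = {"host-a": {"vm1": 4, "vm2": 2, "vm3": 2}}
free_capacity = {"host-b": 5, "host-c": 4}

failed = find_failed_hosts(last_heartbeat, now=130, timeout=10)
plan = restart_plan(failed[0], vms_on_host, free_capacity)
print(failed, plan)
```

The sketch raises an error when the surviving hosts lack capacity, which mirrors the design assumption above: the environment must hold enough spare resources to absorb the failure of one physical server.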

The image below shows the VMware concept. VMware ESX represents the hypervisor in this image. Microsoft and Stratus offer similar functionality.

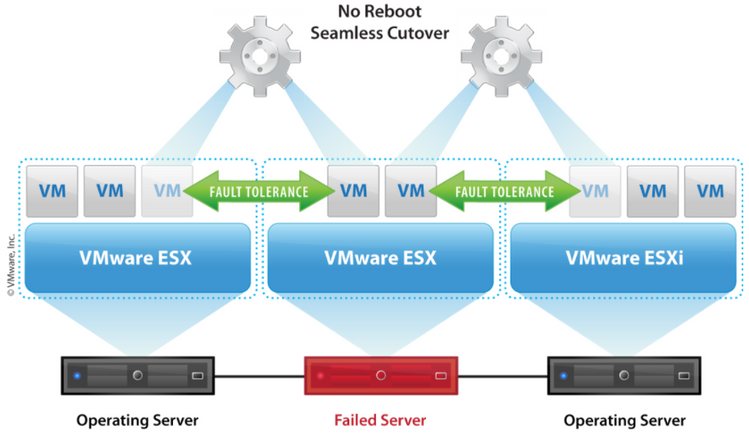

Active-Active (Fault-Tolerance)

When the application is critical and the downtime caused by the active-passive method is not acceptable, an active-active scenario can be deployed. When a virtual machine is started, the hypervisor sets up a session with a second hypervisor and uses this session to synchronize the full state of the virtual machine between the two hypervisors. When the physical server fails, the state of the virtual machine has already been fully synchronized to a second physical server, which seamlessly takes over the responsibilities of the failed server. As a result, there is no downtime of the virtual machine or of the application(s) running on top of it.

The image below shows the VMware concept. VMware ESX represents the hypervisor in this image. Stratus offers similar functionality. Microsoft currently does not offer this as part of Hyper-V.

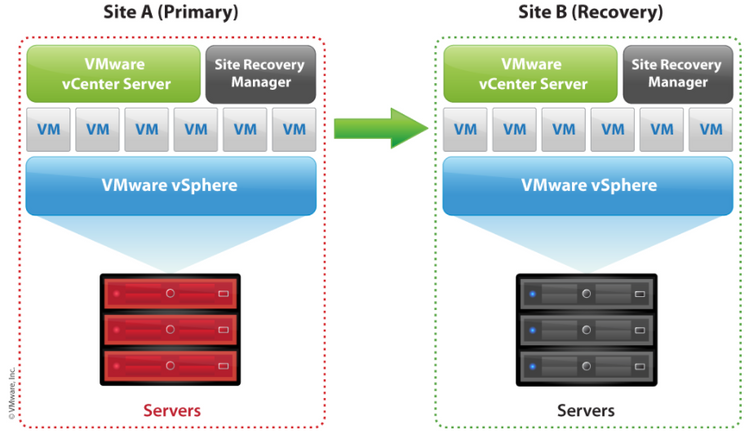

Disaster recovery

Both the active-active and active-passive solutions are mostly used within the context of one physical location. This is related to the infrastructure requirements these solutions need to operate. When this single location "burns down", the environment cannot be easily restored. By replicating the state of the virtual machines to another location, services can be restored with limited downtime.

The image below shows the VMware concept. VMware ESX represents the hypervisor in this image. Microsoft offers similar functionality in Hyper-V.

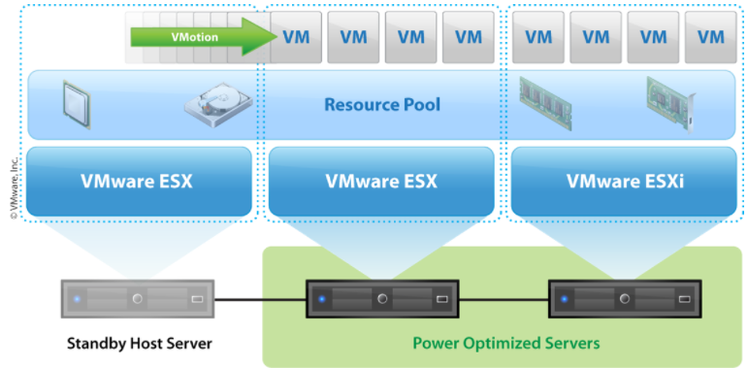

Virtualization will ease system maintenance

As mentioned before, most virtualization environments offer an additional management layer. This management layer not only increases system availability, but also reduces the system maintenance effort, especially in larger environments. The management layer offers a unified interface to the physical servers and virtual servers. This enables system administrators to automate management tasks, such as the deployment of new servers. It also enables them to execute maintenance on physical systems without any downtime. These are just a few examples of management benefits.

The image below shows the VMware concept. VMware ESX represents the hypervisor in this image. Microsoft offers similar functionality in Hyper-V.

Virtualization decreases power consumption

During off-peak hours the resource consumption of the environment could, depending on the applications, go down. As a result, the entire environment will have an overcapacity of computing resources. To reduce this overcapacity, the management layer is able to rearrange the virtual machines on the physical servers and shut down physical servers which are no longer needed. Once the consumption of computing resources starts to increase again, the physical servers are powered up and added back to the pool of resources. This is especially useful in bigger environments which have predictable peaks in resource consumption.
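The rearranging step can be approximated with a classic bin-packing heuristic. The Python sketch below uses first-fit decreasing, which is an assumption made for illustration: the actual placement algorithms of the management products are not described in this article. The load figures and the function `hosts_needed` are hypothetical.

```python
def hosts_needed(vm_loads, host_capacity):
    """Pack VM loads onto hosts with first-fit decreasing and count the hosts used.

    vm_loads:      load of each VM, in percent of one host's capacity
    host_capacity: capacity of a single host, in the same unit
    """
    remaining = []  # remaining capacity of each powered-on host
    for load in sorted(vm_loads, reverse=True):
        if load > host_capacity:
            raise ValueError("a single VM exceeds the capacity of one host")
        for i, free in enumerate(remaining):
            if load <= free:
                remaining[i] -= load
                break
        else:
            # No powered-on host has room, so another host is needed.
            remaining.append(host_capacity - load)
    return len(remaining)


# Hypothetical load per virtual machine, in percent of one host.
peak_loads = [60, 50, 40, 40, 30, 30]
off_peak_loads = [20, 15, 10, 10, 10, 5]

peak_hosts = hosts_needed(peak_loads, 100)
off_peak_hosts = hosts_needed(off_peak_loads, 100)
print(f"hosts needed at peak: {peak_hosts}, off-peak: {off_peak_hosts}")
print(f"hosts that can be powered down off-peak: {peak_hosts - off_peak_hosts}")
```

With these assumed figures three hosts are needed at peak and one during off-peak hours, so two hosts could be powered down. A real environment would also keep headroom for failures and for the time it takes to power servers back up.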

Available products

Virtualization is at its peak, and at this moment still dominated by VMware. Microsoft is catching up, while other niche players are focussing on offering solutions for specific market segments. One example is Stratus, a company which prevents critical business applications from failing. All of these systems are focussed on on-premises (or private-cloud) environments. At the same time Microsoft (Azure), Amazon (Amazon Web Services) and VMware (vCloud) are growing their public cloud offerings. Due to the nature of safety and security systems, this article focusses on the on-premises product offerings of these vendors.

| Virtualization product | BVMS | VRM | VSG | MVS | BIS | AMS |
|---|---|---|---|---|---|---|
| VMware virtualization | Yes | Yes | Not tested | Yes | Yes | Yes |
| VMware high availability | Yes | Not tested | Not tested | Not tested | Not tested | Not tested |
| VMware fault tolerance | Yes | No | Not tested | Not tested | Not tested | Not tested |
| Hyper-V virtualization | Yes | Yes | Not tested | Yes | Yes | Yes |
| Hyper-V fail-over clustering | Yes | No | Not tested | Not tested | Not tested | Not tested |
| Stratus Everrun | Yes | No | Not tested | Not tested | Not tested | Not tested |

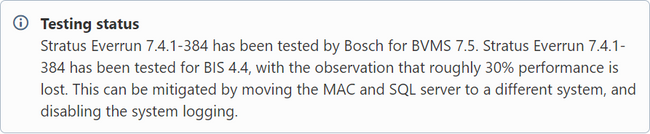

Stratus

Stratus offers Everrun, a solution which focusses on preventing downtime for applications running on the platform.

https://www.stratus.com/solutions/platforms/everrun/

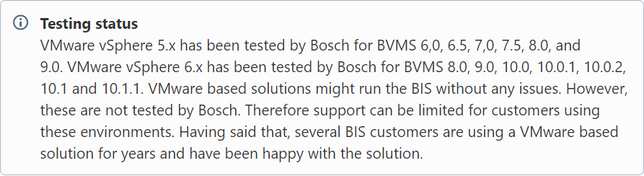

VMware

vSphere

VMware's virtualization solution for server virtualization.

https://www.vmware.com/products/vsphere.html

Horizon

VMware's virtualization solution for desktop virtualization.

https://www.vmware.com/products/desktop-virtualization.html

Workstation

VMware's virtualization solution to run on workstations.

https://www.vmware.com/products/personal-desktop-virtualization.html

Microsoft Hyper-V

Server virtualization

Microsoft's virtualization solution for server virtualization.

https://www.microsoft.com/en-us/cloud-platform/server-virtualization

Client virtualization

Nvidia and Microsoft have partnered up to offer a GPU accelerated, virtualized, desktop experience. More information can be found on the Nvidia and Microsoft websites.

Kernel-based Virtual Machine (KVM)

This is an open-source virtualization solution, maintained by the open-source community. Several commercially available virtualization products are based on KVM.

XenServer

XenServer is another open-source virtualization solution, maintained by the open-source community.

Virtualization in the security industry

As most security environments are still disconnected from the IT environment, the security industry has mainly used the virtualization concept to increase the reliability of the software used in such environments.

A complex concept

Virtualization products are complex and offer a lot of functionality. Professional training on such a product can easily take a full week. In order to meet and exceed the expectations of its customers, Bosch recommends that system installers outsource the design, set-up and maintenance of such an environment. Alternatively, the system installer can apply for local training on the specific platform, or contact the vendor of the platform directly for support. For example, Stratus offers professional services, which include consulting services.

Licensing

As the virtualization concept is transparent to the operating system and applications, no additional Bosch licenses are required when Bosch products are installed on top of a virtualization platform. Information on the licensing of the virtualization platform itself can be obtained from the vendor of the platform. The vendor, or one of its certified local partners, can offer a custom quotation based on the specific project requirements.

Training

Recommended training courses to connect the dots.

Stratus

Stratus offers several educational services and trainings.

VMware

VMware offers educational services. For VMware vSphere, Bosch recommends looking at the Data Center Virtualization courses and certification tracks.

Microsoft

The Microsoft Learning environment offers several basic learning modules online. In addition, classroom training and certification tracks are offered.

Others

For the open-source products, a lot of information is available online. The learning curve of such solutions is mostly a bit steeper compared to their commercial counterparts.

Frequently asked questions

| Question | Answer |
|---|---|
| What are the recommended virtual machine performance settings for a specific application? | The datasheets of all Bosch software products contain the minimum system requirements (including the memory and CPU requirements). These requirements relate directly to the performance settings of the virtual machine in which the application is running. If the physical server on which the Bosch software is running is overloaded by other virtual machines, response times of the Bosch application might increase and user experience might suffer or problems might appear. |
| Can I use the Bosch management server to virtualize the software? | We do not recommend using our management servers for virtualization. They are specified as management servers to run one single application, and are not suitable for virtualization. We recommend that a virtualization specialist evaluates the hardware requirements based on the datasheets of our software. |
| What are the drawbacks of virtualization? | One of the major drawbacks of virtualization is that it increases the complexity of the environment. People managing the environment need to be trained and able to troubleshoot the environment when failures occur. |
| Are virtual environments secure? | As the concept allows system administrators to easily decouple applications, it can create an additional layer of security. However, the administrative management layer can also be considered a security drawback. As with any software product, virtual environments are only as secure as their configuration. |
| How many virtual machines can I run on a single physical server? | That depends on the required performance of the application and the offered performance of the server. If the application requires, for example, a quad-core processor with 8 GB of memory, and the physical server offers an eight-core processor with 16 GB of memory, two virtual machines with such applications could run on that physical server. |
| Is virtualization beneficial for every environment? | No. The decision to use virtualization should come from the business and usage of the application running on top of the platform. |
| What performance issues can I expect? | Memory and CPU related performance can (for most virtualization products) be easily monitored on a physical level (how many resources are available on the hardware) as well as on a virtual level (how many resources are consumed by the virtual machine). When an external storage device is used (for example, a Fibre Channel or iSCSI SAN), storage I/O can become a bottleneck. Storage I/O relates to the throughput (how much data the system can write within a given amount of time) as well as the latency (how long it takes to write a single byte to the hard drives). VMware published two troubleshooting articles (part 1 and part 2) on their blog, which indicate the complexity of storage I/O related issues. |
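The answer to "How many virtual machines can I run on a single physical server?" can be expressed as a small calculation. The Python sketch below is a simplification under stated assumptions: the function `max_vms` is hypothetical, it considers only processor cores and memory, and it ignores hypervisor overhead, overbooking and storage I/O.

```python
def max_vms(host_cores, host_memory_gb, vm_cores, vm_memory_gb):
    """Number of identical VMs that fit on one host without overbooking.

    The scarcest resource (cores or memory) determines the result.
    """
    by_cores = host_cores // vm_cores
    by_memory = host_memory_gb // vm_memory_gb
    return min(by_cores, by_memory)


# The example from the FAQ: an eight-core host with 16 GB of memory,
# and an application that requires four cores and 8 GB.
fit = max_vms(host_cores=8, host_memory_gb=16, vm_cores=4, vm_memory_gb=8)
print(f"virtual machines that fit: {fit}")
```

In practice some resources have to be reserved for the hypervisor itself, so the usable number can be lower than this calculation suggests.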
