
BVMS - Person Identification Camera Placement Guide

Possible causes and solution(s)


Causes

This article describes the considerations that need to be taken into account when designing a video surveillance system that is suitable for person identification.

Terms and Abbreviations

| Product / Service Name | Description |
|---|---|
| BVMS Person Identification powered by AnyVision | BVMS Person Identification powered by AnyVision allows security system operators to identify persons of interest. Person Identification is a feature expansion of BVMS and is supported by BVMS 10.0. |
| DL380 Gen10 AI Server (AI Server) | The DL380 Gen10 AI Server is specifically designed for AI use cases and comes with a Linux OS and 2 x NVIDIA P4000 GPUs. The AI Server can analyze 8 video streams (1080p) simultaneously. |
| Person Identification Device (PID) | Corresponding hardware |
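Because each AI Server analyzes up to 8 simultaneous 1080p streams, a first-pass server count is a simple round-up. The sketch below is a minimal illustration under that single assumption; it ignores redundancy, headroom, and licensing, and the function name is hypothetical.

```python
import math

# From the table above: one AI Server analyzes up to 8 video streams (1080p) simultaneously.
STREAMS_PER_AI_SERVER = 8


def ai_servers_needed(camera_streams: int) -> int:
    """Return the minimum number of AI Servers for the given number of 1080p streams.

    Assumes every stream is analyzed continuously and ignores redundancy or headroom.
    """
    if camera_streams < 0:
        raise ValueError("camera_streams must not be negative")
    # Round up: a partially used server still counts as one server.
    return math.ceil(camera_streams / STREAMS_PER_AI_SERVER)


if __name__ == "__main__":
    for streams in (8, 12, 30):
        print(f"{streams} streams -> {ai_servers_needed(streams)} AI Server(s)")
```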


Solution

Camera Placement

Field of View

Field of View (FOV) is the part of the scene that is visible through a camera's lens. Each camera is positioned at a particular location and orientation in space, and objects outside its FOV are not recorded in a video or photograph. Proper, strategic camera positioning is therefore crucial for capturing usable facial features in a video.

Capturing Facial Features - Mathematical Models

Facial features are represented in AnyVision as a mathematical model (also called a vector). AnyVision creates a mathematical model for each face detected in the FOV of a camera's video feed. It also creates a mathematical model of every face that is enrolled in the AnyVision system (meaning that it was added as a Person of Interest (POI) to the AnyVision Watchlist). AnyVision then recognizes POIs in a video by comparing the mathematical models of all enrolled faces with those of the faces detected in the FOV.
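The comparison step can be pictured as a nearest-match search between vectors. The sketch below is a generic illustration, not AnyVision's algorithm: the similarity measure (cosine similarity), the threshold of 0.6, the POI names, and the toy 3-dimensional vectors are all hypothetical assumptions.

```python
import math
from typing import Dict, List, Optional, Tuple

Vector = List[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two feature vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_match(
    detected: Vector,
    watchlist: Dict[str, Vector],
    threshold: float = 0.6,  # hypothetical decision threshold
) -> Optional[Tuple[str, float]]:
    """Compare one detected face with every enrolled face; return the best POI above the threshold."""
    best_name: Optional[str] = None
    best_score = -1.0
    for name, enrolled in watchlist.items():
        score = cosine_similarity(detected, enrolled)
        if score > best_score:
            best_name, best_score = name, score
    if best_name is not None and best_score >= threshold:
        return best_name, best_score
    return None  # no enrolled face is similar enough: not recognized as a POI


if __name__ == "__main__":
    # Toy 3-dimensional vectors; real face vectors are much longer.
    watchlist = {"poi_a": [1.0, 0.0, 0.0], "poi_b": [0.0, 1.0, 0.0]}
    print(best_match([0.9, 0.1, 0.0], watchlist))  # matches poi_a
    print(best_match([0.0, 0.0, 1.0], watchlist))  # None
```

In a scheme like this, lowering the threshold trades fewer false negatives for more false positives, and low-quality vectors push genuine matches closer to the threshold.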

While AnyVision is quite powerful, there are some minimum requirements for video capture and camera location/positioning that enable AnyVision's artificial-intelligence neural network to generate a high-quality mathematical model of people's facial features. The AnyVision detector can locate faces that are quite small or that were recorded in imperfect video capture conditions, but the quality of the facial mathematical model degrades when conditions are sub-optimal.

High-Quality Mathematical Models

The following sections describe how to determine the optimal camera locations, angles, FOV, and other factors in order to get the best results from your face recognition system. The best facial recognition results are achieved by providing the conditions that enable AnyVision to create the highest-quality facial mathematical models. High-quality models increase the precision with which AnyVision recognizes POIs. This results in optimal identification of POIs, optimal representation of faces for future use, fewer false positives (incorrectly recognizing a person as a POI), and fewer false negatives (not recognizing a POI who is in the camera's FOV).

Video Face Size

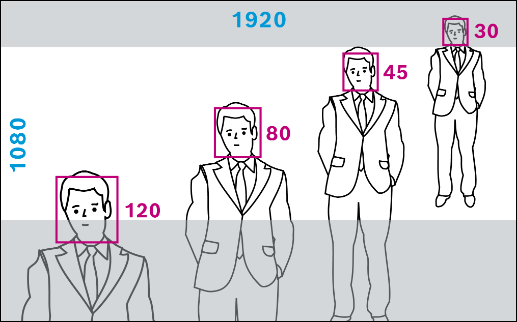

How many pixels does BVMS person identification need?

Generally, high-quality mathematical models can be created from faces that are represented by at least 80 x 80 Pixels Per Face (PPF). Faces of this size enable AnyVision not only to detect that there is a face, but also to recognize a person as a POI (meaning someone who was added to the Bosch Person Identification Subjectlist). AnyVision still provides recognition for faces as small as 45 x 45 PPF.

Example: The following example shows how to calculate Pixels Per Meter (PPM) and PPF for a scene. The minimum for face recognition is about 280 PPM, which corresponds to 45 PPF.

A typical face is 16 cm (0.16 m) wide. If a camera is set up to cover the full width of a corridor that is 4 m wide, the number of pixels per meter is the camera's horizontal resolution divided by 4. For example, a typical Full High Definition (FHD) (2MP) camera has a resolution of 1920 (width) x 1080 (height) pixels. The 1920 horizontal pixels cover the entire width of the corridor, giving 1920 / 4 = 480 PPM. Because an average face is 0.16 m wide, it is represented by 480 x 0.16 = 76.8 Pixels Per Face (PPF).
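The corridor arithmetic can be written as three small helpers. This is a minimal sketch that assumes, as the example does, a 0.16 m face width and a capture line that spans exactly the camera's horizontal view; the function names are illustrative only.

```python
FACE_WIDTH_M = 0.16  # typical face width (16 cm), as used in this article


def pixels_per_meter(horizontal_pixels: int, fov_width_m: float) -> float:
    """Horizontal pixels spread across the scene width at the capture line."""
    return horizontal_pixels / fov_width_m


def pixels_per_face(horizontal_pixels: int, fov_width_m: float,
                    face_width_m: float = FACE_WIDTH_M) -> float:
    """Horizontal pixels that land on one face at the capture line."""
    return pixels_per_meter(horizontal_pixels, fov_width_m) * face_width_m


def min_ppm_for_ppf(target_ppf: float, face_width_m: float = FACE_WIDTH_M) -> float:
    """PPM needed so that a face of face_width_m gets target_ppf pixels."""
    return target_ppf / face_width_m


if __name__ == "__main__":
    # FHD camera (1920 horizontal pixels) covering a 4 m wide corridor
    print(pixels_per_meter(1920, 4.0))            # 480.0
    print(round(pixels_per_face(1920, 4.0), 1))   # 76.8
    print(round(min_ppm_for_ppf(45), 2))          # 281.25
```

The last line shows where the 280 PPM minimum comes from: 45 PPF divided by 0.16 m is about 281 PPM, which the article rounds to 280.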

Distance

Faces that are close to the camera appear larger than those that are far away. The following are some general rules of thumb for the number of PPM and PPF at varying distances from a typical Full High Definition (FHD) (2MP) camera.

Before installing the camera, assess the distance from the camera lens at which the video will capture the best frontal view of faces. Then use the camera resolution to estimate the maximum FOV width, in meters, in the zone of interest (where faces are expected to be captured).
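Distance and FOV width can be related through the lens's horizontal angle of view. The sketch below assumes a rectilinear (non-fisheye) lens, a frontal face at the 0.16 m width used above, and a hypothetical 90° horizontal angle of view; take the real angle from the camera datasheet.

```python
import math

FACE_WIDTH_M = 0.16  # typical face width used in this article


def fov_width_at(distance_m: float, hfov_deg: float) -> float:
    """Scene width (m) covered at a given distance by a rectilinear lens."""
    return 2 * distance_m * math.tan(math.radians(hfov_deg) / 2)


def ppf_at(distance_m: float, hfov_deg: float, horizontal_pixels: int = 1920) -> float:
    """Approximate pixels per face for a frontal face at the given distance."""
    return horizontal_pixels / fov_width_at(distance_m, hfov_deg) * FACE_WIDTH_M


def max_fov_width_for(target_ppf: float, horizontal_pixels: int = 1920) -> float:
    """Widest zone of interest (m) that still yields target_ppf."""
    return horizontal_pixels * FACE_WIDTH_M / target_ppf


def max_distance_for(target_ppf: float, hfov_deg: float, horizontal_pixels: int = 1920) -> float:
    """Farthest distance (m) at which a face still gets target_ppf."""
    half_angle = math.radians(hfov_deg) / 2
    return max_fov_width_for(target_ppf, horizontal_pixels) / (2 * math.tan(half_angle))


if __name__ == "__main__":
    HFOV_DEG = 90.0  # hypothetical horizontal angle of view; take it from the camera datasheet
    for d in (2.0, 3.0, 4.0):
        print(f"{d:.0f} m: {ppf_at(d, HFOV_DEG):.1f} PPF")
    for ppf in (45, 80):
        print(f"{ppf} PPF: zone up to {max_fov_width_for(ppf):.2f} m wide, "
              f"up to {max_distance_for(ppf, HFOV_DEG):.2f} m away")
```

For an FHD camera, `max_fov_width_for(45)` is about 6.8 m and `max_fov_width_for(100)` about 3.1 m, close to the 6.5 m and 3 m figures this guide uses when discussing focal length (those appear to be rounded down for margin).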

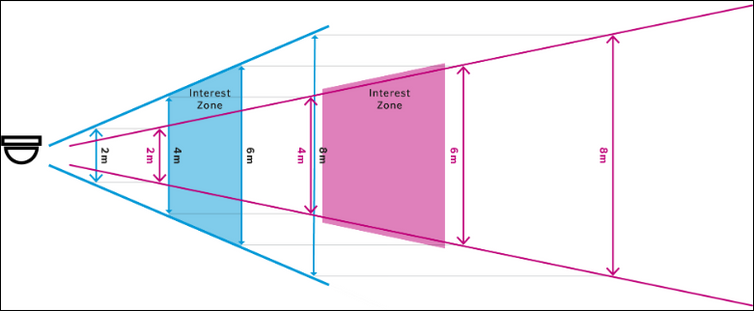

Adjusting camera focal length

When using PTZ or varifocal lenses, you can change the focal length (zoom) of the camera to enable face recognition further away from the camera. This adjustment narrows the FOV of the camera. The main objective is to change the distance between the camera and the capture point. The width of the capture line does not change, because it is determined by the number of pixels required per face. The diagram below shows how the distance from the camera changes while the width of the zone stays fixed at 6 m. In this area, faces are captured at sizes ranging from 45 x 45 PPF (6.5 m width for an FHD camera) to 100 x 100 PPF (3 m width for an FHD camera). The blue area shows a wider zoom and the red area a narrower zoom.

The image below also shows that, with a wider zoom, the best facial recognition (the Interest Zone) is achieved closer to the camera (between 4 and 6 m away), and with a narrower zoom, further from the camera (starting from 8 m away).

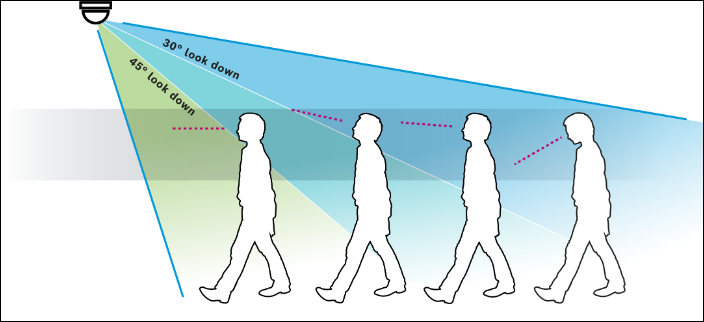

Camera Height and Angle

AnyVision can detect faces viewed at an angle of up to 45º (the angle between the camera's line of sight and a straight-on view of the face). However, reliable person identification requires an angle of no more than 30º, and optimal results are achieved at 0º to 20º. The angle can be reduced by mounting the camera further away and using optical zoom or a longer lens.
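For a ceiling- or wall-mounted camera, the downward viewing angle follows from the mounting height, the face height, and the horizontal distance. This sketch assumes a level floor and a person looking straight ahead (head tilt is not modeled); the 3.0 m mounting height and 1.7 m face height are hypothetical, and the range labels simply restate the thresholds above.

```python
import math


def downward_angle_deg(camera_height_m: float, face_height_m: float, horizontal_distance_m: float) -> float:
    """Angle (degrees) between the camera's line of sight to the face and the horizontal."""
    return math.degrees(math.atan2(camera_height_m - face_height_m, horizontal_distance_m))


def classify_angle(angle_deg: float) -> str:
    """Map an angle to the ranges given in this section."""
    a = abs(angle_deg)
    if a <= 20:
        return "optimal"
    if a <= 30:
        return "reliable identification"
    if a <= 45:
        return "detection only"
    return "out of range"


def min_distance_for_angle(camera_height_m: float, face_height_m: float, max_angle_deg: float) -> float:
    """Closest horizontal distance (m) at which the angle stays within max_angle_deg."""
    return (camera_height_m - face_height_m) / math.tan(math.radians(max_angle_deg))


if __name__ == "__main__":
    CAMERA_H, FACE_H = 3.0, 1.7  # hypothetical mounting height and face height
    for d in (2.0, 4.0):
        angle = downward_angle_deg(CAMERA_H, FACE_H, d)
        print(f"{d} m: {angle:.1f} deg -> {classify_angle(angle)}")
    print(f"Optimal from {min_distance_for_angle(CAMERA_H, FACE_H, 20):.2f} m onwards")
```

Moving the capture line further away lowers the angle but also lowers the PPF, which is why the text pairs the larger distance with optical zoom.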

The image above also shows that additional factors must be considered, such as people tilting their faces downward (when descending steps or checking mobile phones). Compensate for these factors by adjusting the camera angle. To capture people wearing caps or hats with maximum performance, place the camera at the shallowest angle that still maintains a clear line of sight to the capture line. In the image, the blue area marks the optimal downward angle (up to 30°), the yellow area is a reasonable range, and the red area (45°) is more challenging.

Direction of Movement

Frontal view is best achieved in areas of movement

The highest quality mathematical models and recognition results are achieved when cameras are positioned to obtain a frontal view of people’s faces.

This is true even though the system can detect and recognize faces in up to a 90º side view (also called full profile, where the nose points sideways).

In real-world environments, people turn to talk to associates, look at things in their surroundings, and so on. Therefore, a best practice is to point and focus cameras on areas where people are moving (walking and so on), because people tend to look forward while walking and often face in similar directions. Choke points (narrow passages that people must pass through) may also be suitable. However, people who are not moving tend to gather in clusters and face inwards, which may block recognition angles.

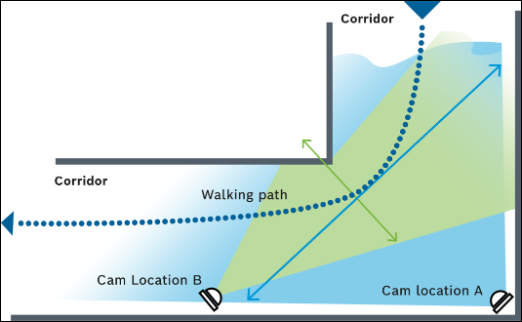

Moving towards the camera

When people move across a camera's field of view, the camera most likely captures side profiles. Their faces also do not get larger (because they are not coming closer to the camera), and facial details are hidden.

It is preferable to place cameras where people are moving towards the camera (their faces get larger as they come closer to the camera) rather than crossing the scene, as shown in the diagram below.

The direction of movement can also affect video quality: artifacts may appear and faces may be slightly smeared, which lowers the quality of the facial mathematical model. This typically occurs when people cross the scene at high speed, as shown in the picture above on the left.

In the image below, Camera A is located in the corner of a corridor and covers both sides. Given typical walking paths, Camera A will capture faces that are small (wide-angle lens) and in profile view, while Camera B will typically capture faces looking towards the camera at a higher PPF. Therefore, Camera B's location will generate better results.

Psychological Aspects

An interest zone is the area at which the camera should be pointed and focused to ensure optimal capture of people's faces (frontal view, sharp image, and so on). This might be a specific area of a room, a building entrance, a hallway, and so on. When planning the camera's field of view, take human psychology and behavior into account to ensure that people are not looking down at the time and place where the camera records them. This significantly increases the probability of obtaining a high-quality mathematical model.

- Focal points attract attention:

  - When a camera is located in an area that attracts attention, such as near a TV screen or an eye-catching advert, people are more likely to look up towards it.

  - Sounds also attract attention. For example, people tend to look towards speakers during announcements, towards crowded, loud areas when passing them, and at screens with sound. Depending on camera placement, this may act either as a focal point or as a distraction.

- New Areas: People typically look down when they enter a new area, such as after going through a gate, or when they need to take special care with the next step (such as when getting on or off an escalator). In this case, the person looks down to find the next step and then looks up and forward immediately afterwards.

- Avoiding Areas with Long Walks: In open areas where people walk long distances, many concentrate on their mobile devices with their faces pointing downwards, which makes it more difficult to get a good facial view.

Camera Focus

In most video surveillance deployments, the camera is set to autofocus mode. In this mode, the camera's algorithm looks for sharp, high-contrast edges in the field of view and sets the focus on that location. This often focuses the camera on patterns on the ground (such as a carpet), a picture on the wall, and so on, rather than on moving faces. Because the system relies on facial details, the focus must be set manually so that it captures faces in the interest zone. It is best to set the maximum focal range, because this increases the depth of the area that is in focus.

Lens Selection

When planning the Interest Zone, its distance from the camera and the expected field of view width depend on the lens used, because different lenses have different focal lengths. It is important to calculate the required focal length for the scene. A shorter focal length widens the field of view and moves the interest zone closer to the camera.
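A first estimate of the focal length can come from the pinhole-camera approximation, where FOV width divided by distance equals sensor width divided by focal length. This is a sketch only: it assumes a rectilinear lens and a distance much larger than the focal length, it does not apply to fisheye lenses, and the 5.6 mm sensor width is a hypothetical value to be replaced with the camera's datasheet figure.

```python
def required_focal_length_mm(sensor_width_mm: float, distance_m: float, fov_width_m: float) -> float:
    """Focal length that makes the scene fov_width_m wide at distance_m (pinhole model)."""
    return sensor_width_mm * distance_m / fov_width_m


def fov_width_at_focal_length(sensor_width_mm: float, focal_length_mm: float, distance_m: float) -> float:
    """Scene width (m) covered at distance_m for a given focal length (pinhole model)."""
    return sensor_width_mm * distance_m / focal_length_mm


if __name__ == "__main__":
    SENSOR_WIDTH_MM = 5.6  # hypothetical sensor width; use the value from the camera datasheet
    # A 4 m wide capture line (about 77 PPF on an FHD camera) placed 8 m from the camera:
    print(round(required_focal_length_mm(SENSOR_WIDTH_MM, 8.0, 4.0), 1))  # 11.2
    # A shorter focal length widens the view: at 2.8 mm the scene is 8 m wide at 4 m distance.
    print(round(fov_width_at_focal_length(SENSOR_WIDTH_MM, 2.8, 4.0), 1))  # 8.0
```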

Fisheye lenses are not recommended. They have very short focal lengths and very wide angles, which spread the camera's pixels over a large area and reduce the PPF, and they also create a distorted view that degrades the recorded facial quality.

Light

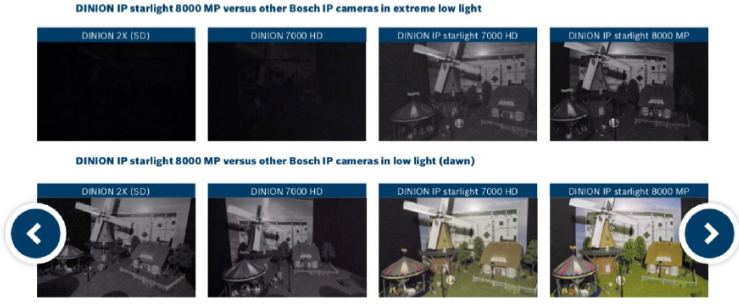

Better illumination of faces increases the level of detail the system can acquire from them. Different cameras with different setups produce different image quality, and each camera has a fixed minimum illumination rating (its low-light performance, specified in lux) that cannot be adjusted. Therefore, select cameras with the best (lowest) possible minimum illumination rating.

It is best to use cameras that provide High Dynamic Range (HDR), Wide Dynamic Range (WDR), and high image sensor sensitivity (ISO), which are essential compensating measures in challenging lighting, such as dim environments.

The picture below shows a strong backlight, which results in a dark face and makes facial details difficult to distinguish.

Person identification works best in areas with even lighting. Downlights often create stark shadows directly underneath where people may be standing. In these areas, choosing a capture line before the light, where the lighting level is lower but more even, provides better performance. Mirrors and marble floors can also reflect light, which affects the light level on faces; avoid these settings.

Bit Rate

In many video management systems, a low bit rate is used to save video storage. With a low bit rate, there may not be enough bit rate budget to encode movement in the picture, so compression artifacts appear. The resulting pixelization lowers video quality, which degrades the quality of the face's mathematical model. To ensure the best video quality when a person is in the picture, set the camera to Variable Bit Rate (VBR) mode instead of Constant Bit Rate (CBR) mode. You should also consider using camera view areas to maximize the quality for any given bit rate (especially where padding could be used).
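One rough way to compare bit rate settings is the average number of encoded bits available per pixel per frame. This is only a comparison aid under stated assumptions: the article gives no target value, actual quality also depends on the codec and scene, and the 2 and 6 Mbit/s figures are illustrative.

```python
def bits_per_pixel(bitrate_bps: float, width: int, height: int, fps: float) -> float:
    """Average encoded bits available per pixel per frame."""
    return bitrate_bps / (width * height * fps)


if __name__ == "__main__":
    # FHD (1920 x 1080) at 25 frames per second; both bit rates are illustrative only.
    for mbps in (2, 6):
        bpp = bits_per_pixel(mbps * 1_000_000, 1920, 1080, 25)
        print(f"{mbps} Mbit/s -> {bpp:.3f} bits per pixel per frame")
```

This is an average: with VBR the encoder can spend more than the average on frames with movement, whereas CBR caps the budget even when a person walks through the scene.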

Face Enrollment

When a picture of a person of interest is added to the BVMS Person Identification subjectlist, AnyVision creates a mathematical model of that person's facial features, which is used as a reference for recognizing that person. This process is called Face Enrollment. After a face has been enrolled, AnyVision can recognize that person whenever they are in the field of view of any camera configured for Person Identification. A high-quality reference picture is critical for generating the unique mathematical model, which directly influences the system's results. The following are the requirements for optimal face enrollment:

- Picture in JPG format

- Recent picture

- Face is in focus

- Color photo

- Balanced light and no shadow

- Picture is not stretched or distorted

- Face size is at least 100 x 100 pixels (picture size of 200 x 200 pixels when the face is at least half of the picture)

- Facing the camera

- The entire face is visible

- Neutral expression and both eyes open

- Without sunglasses

- Single face in the picture
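Some of these requirements can be checked automatically before enrollment. The sketch below covers only the measurable items (JPG format, color, a single face, and a face of at least 100 x 100 pixels); focus, lighting, expression, and the remaining items still need human review. The face sizes are assumed to come from a separate face detector, which is not specified here, and all names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

MIN_FACE_PX = 100  # from the enrollment requirements above


@dataclass
class EnrollmentCandidate:
    filename: str
    is_color: bool
    # (width, height) in pixels of each face found by a hypothetical face detector
    face_boxes: List[Tuple[int, int]] = field(default_factory=list)


def check_enrollment(c: EnrollmentCandidate) -> List[str]:
    """Return a list of problems; an empty list means the automatic checks passed."""
    problems = []
    if not c.filename.lower().endswith((".jpg", ".jpeg")):
        problems.append("picture is not in JPG format")
    if not c.is_color:
        problems.append("picture is not in color")
    if len(c.face_boxes) != 1:
        problems.append(f"expected exactly one face, found {len(c.face_boxes)}")
    elif min(c.face_boxes[0]) < MIN_FACE_PX:
        problems.append(f"face is smaller than {MIN_FACE_PX} x {MIN_FACE_PX} pixels")
    return problems


if __name__ == "__main__":
    print(check_enrollment(EnrollmentCandidate("poi.jpg", True, [(120, 140)])))
    print(check_enrollment(EnrollmentCandidate("poi.png", False, [(80, 90), (100, 100)])))
```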

Always enroll the highest-quality picture of a person of interest that is available into the BVMS Person Identification subjectlist. If only a lower-quality reference picture is available, it is best used for manually searching for this person of interest.
